Companies investing billions in AI infrastructure

$650 billion. That’s not a government budget. That’s not a multi-decade infrastructure programme. That’s what the world’s biggest tech companies are expected to pour into AI infrastructure in 2026 alone.

Let that land for a second.

We’re watching the largest voluntary concentration of capital in corporate history, and it’s accelerating. Microsoft, Amazon, Google, Meta, xAI, Oracle, Anthropic. Each writing cheques with eleven or twelve figures. Each betting that the company that owns the compute owns the future.

But here’s the tension nobody can quite resolve: is this genuine vision, the construction of a new technological civilisation, or the most expensive case of competitive panic ever recorded? Are these companies building the roads and railways of the 21st century, or collectively buying lottery tickets at catastrophic scale?

One thing is certain. This is the infrastructure arms race defining the next decade. And it’s only just getting started.

Why Now? The Forces Driving the AI Infrastructure Boom

The short answer is demand — raw, relentless, and growing faster than anyone predicted. Training frontier AI models like OpenAI’s latest systems or Google’s Gemini requires staggering amounts of compute power. We’re not talking about running a few servers. We’re talking about purpose-built supercomputers consuming as much electricity as mid-sized cities, running continuously, just to push model capabilities incrementally forward. Every new model generation demands more compute than the last. The ceiling, so far, keeps moving up.

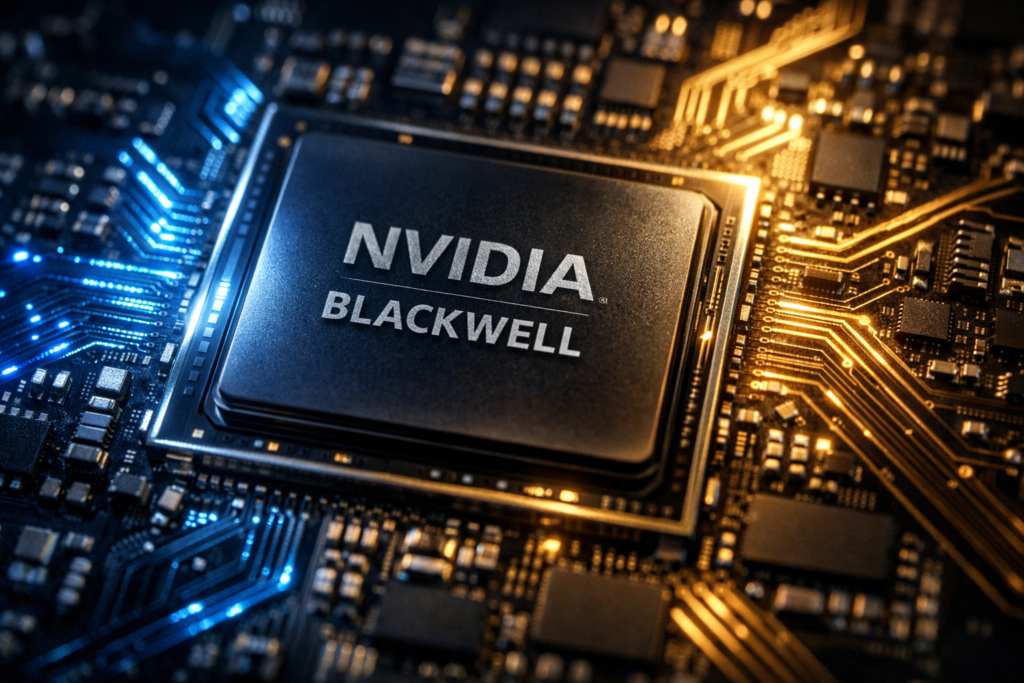

At the centre of this hardware frenzy sits one company: Nvidia. Its Blackwell architecture, particularly the GB200 GPU, has become the de facto gold standard for serious AI infrastructure. Meta is deploying 1.3 million Nvidia GPUs. Microsoft’s Fairwater cluster alone houses over 150,000 GB200s. xAI’s Colossus supercluster runs on more than 550,000 Nvidia chips. Demand for these processors is so intense that lead times stretch for months, and allocation has become a strategic advantage in itself. If you have the chips, you have power. Simple as that.

Then there’s the energy question — and it’s becoming impossible to ignore. Gigawatt-scale data centers aren’t a metaphor. They are literal gigawatt consumers, drawing power equivalent to large industrial facilities, around the clock. Companies are now securing long-term energy contracts, building on-site power generation, and scouting land in regions with reliable electricity grids. The AI boom isn’t just a software story. It’s a power infrastructure story, a land story, a water cooling story. The physical footprint of intelligence is enormous.
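To put "gigawatt-scale" into annual terms, here is a minimal back-of-envelope sketch in Python. It assumes a facility drawing a steady load all year; the 1 GW and 2 GW inputs are illustrative round numbers rather than measurements from any specific site, and the function name and the `utilisation` parameter are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours in a non-leap year


def annual_energy_twh(power_gw: float, utilisation: float = 1.0) -> float:
    """Energy used over one year, in terawatt-hours, for a load in gigawatts.

    `utilisation` is an assumed average load factor between 0 and 1.
    """
    gwh = power_gw * HOURS_PER_YEAR * utilisation  # gigawatt-hours per year
    return gwh / 1000  # 1 TWh = 1,000 GWh


if __name__ == "__main__":
    for gw in (1, 2):
        twh = annual_energy_twh(gw)
        print(f"{gw} GW around the clock is about {twh:.2f} TWh per year")
```

Lowering `utilisation` shows how much the annual total depends on whether a site really runs flat out, which is the assumption behind the "around the clock" framing.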

What makes this moment distinct from previous tech investment cycles is the timeline. Nvidia’s CEO Jensen Huang has stated plainly that this level of capital expenditure is expected to continue for seven to eight years. This isn’t a sugar rush. The companies committing hundreds of billions aren’t expecting quick returns; they’re building the foundational layer of what they believe will be a transformed global economy. Whether they’re right is the question hanging over every dollar spent.

The Players & Their Bets — A Company-by-Company Breakdown

The numbers below aren’t projections from optimistic analysts. They’re commitments — announced, budgeted, and in many cases already under construction. Here’s who’s spending what, and why it matters.

| Company | 2025 Capex | 2026 Guidance | Key Focus |
| --- | --- | --- | --- |
| Microsoft | $80B | $150B+ | Azure AI, OpenAI clusters |
| Amazon (AWS) | $100B+ | $125B–$200B | Trainium chips, AI cloud |
| Alphabet/Google | $75B | $91–100B+ | TPU v7, Gemini models |
| Meta | $70–72B | $115–135B | Llama, 1.3M Nvidia GPUs |
| xAI | $10–12B | $20B raised (2026) | Colossus, Grok models |
| Oracle | $10B | Part of $500B Stargate | OCI regions, AI cloud |
| Anthropic | $50B | U.S. data centers TX/NY | Claude models, 1GW+ compute |
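As a rough cross-check of the table, the sketch below adds up the 2026 guidance for the four largest spenders. Treating each range as a low/high pair and each trailing "+" as a floor is a simplification made here, not something the companies have stated, and the names `GUIDANCE_2026` and `total_range` are illustrative.

```python
# 2026 guidance from the table, in billions of US dollars, as (low, high).
# A trailing "+" in the table is treated as a floor on the figure it follows.
GUIDANCE_2026 = {
    "Microsoft": (150, 150),        # "$150B+"
    "Amazon (AWS)": (125, 200),     # "$125B–$200B"
    "Alphabet/Google": (91, 100),   # "$91–100B+"
    "Meta": (115, 135),             # "$115–135B"
}


def total_range(guidance):
    """Sum the low ends and the high ends of each company's range."""
    low = sum(lo for lo, _ in guidance.values())
    high = sum(hi for _, hi in guidance.values())
    return low, high


if __name__ == "__main__":
    low, high = total_range(GUIDANCE_2026)
    print(f"Four largest spenders, 2026 guidance: ${low}B to ${high}B")
```

On these inputs the four companies alone land somewhere between $481 billion and $585 billion, so the rest of the $650 billion headline would have to come from the other players and from spending above the stated floors.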

3a. Microsoft — $80 Billion and the OpenAI Partnership Machine

$80 billion in 2025 capex. Over $150 billion expected in 2026. Microsoft isn’t just investing in AI — it’s betting the entire company architecture on it.

The centrepiece is Stargate, a joint $100B+ supercomputer initiative with OpenAI that represents perhaps the most consequential corporate partnership in tech history. Alongside that sits Fairwater — an Azure cluster housing over 150,000 GB200 GPUs — and a sprawling global network of AI-optimised data centers feeding Azure’s cloud services.

Strategically, Microsoft’s play is clear: if OpenAI builds the most capable models, and Microsoft owns the infrastructure those models run on, it controls the enterprise AI layer for the next decade. Every Azure contract, every Copilot seat, every cloud migration runs on this foundation.

3b. Amazon (AWS) — Trainium Chips and the $200B Cloud Play

$100 billion+ committed for 2025, with guidance pointing toward up to $200 billion in total AI infrastructure investment by 2026. Amazon is playing a longer, quieter game than its rivals — and it might be the smartest bet of all.

While others race to secure Nvidia allocations, AWS is aggressively developing its own silicon. Trainium chips, Amazon’s custom AI accelerators, are designed to reduce dependency on Nvidia while offering competitive performance for cloud-based model training. It’s vertical integration as a competitive moat.

The strategic logic is straightforward. AWS is the world’s dominant cloud provider. Every company building on AI needs compute. If Amazon can deliver that compute cheaper, faster, and at greater scale through proprietary chips and infrastructure, it cements cloud dominance for another generation.

3c. Alphabet/Google — The Quiet $100 Billion Commitment

$75 billion in 2025. Between $91 billion and $100 billion, possibly more, guided for 2026. Google invented the transformer architecture that makes modern AI possible, and it’s not about to cede the infrastructure race to anyone.

Google’s hardware edge is its TPU (Tensor Processing Unit) programme, now at v7, built specifically for training and serving large models like Gemini. Unlike competitors relying primarily on Nvidia, Google has a proprietary silicon advantage developed over a decade. Combined with DeepMind’s research capabilities and YouTube’s data scale, Google’s infrastructure investment isn’t just about compute — it’s about sustaining a full-stack AI advantage.

3d. Meta — 1.3 Million GPUs and Zuckerberg’s Infrastructure Obsession

$70–72 billion in 2025. Up to $135 billion projected for 2026, alongside plans for data centers exceeding 2 gigawatts of capacity. Mark Zuckerberg has been unusually explicit: Meta intends to build the most powerful AI infrastructure on the planet.

The numbers back the ambition. 1.3 million Nvidia GPUs. Llama — Meta’s open-source model family — as the vehicle for establishing AI dominance without a direct cloud product. The strategic angle is distinctive: by open-sourcing Llama, Meta commoditises model development while keeping its infrastructure advantage proprietary. The compute is the product.

3e. xAI — Colossus, Grok, and the Memphis Gamble

$10–12 billion in prior funding. A fresh $20 billion raise in 2026. Elon Musk’s xAI is the most aggressive newcomer in this race — and possibly the most audacious.

Colossus, xAI’s Memphis-based supercluster, already runs on over 550,000 Nvidia chips, with expansion underway. The goal is to build the world’s most powerful AI training facility, purpose-built for Grok model development. What makes xAI’s bet notable isn’t just scale — it’s speed. Colossus went from announcement to operational faster than any comparable facility in history. Musk is treating infrastructure like a product launch.

3f. Oracle & Anthropic — The Stargate Alliance and Claude’s Compute Backbone

Oracle — often overlooked in these conversations — is a critical piece of the Stargate puzzle. Its $10 billion 2025 investment is part of a broader $500 billion Stargate initiative alongside OpenAI and SoftBank, with over 100 OCI cloud regions providing the distribution layer for AI services at global scale.

Anthropic, meanwhile, is committing $50 billion toward U.S.-based data centers in Texas and New York, targeting over 1 gigawatt of compute capacity. For a company whose entire product is Claude — a model designed around safety and reliability — owning the infrastructure isn’t optional. It’s existential. You can’t promise enterprise-grade AI without controlling the compute it runs on.

What $650 Billion Actually Looks Like

Numbers with nine zeroes are easy to say and nearly impossible to comprehend. So let’s make it concrete.

$650 billion is roughly the annual GDP of Belgium, spent in a single investment cycle, by a handful of private companies, on one technology category. It’s more than the United States spent building the Interstate Highway System, adjusted for inflation. At roughly $25 billion a year, it would fund NASA’s entire current budget for about 26 years. This is not incremental spending. This is civilisational-scale capital allocation.
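The NASA comparison depends heavily on the annual budget you assume. The sketch below is a minimal check of that sensitivity; the two budget figures are illustrative inputs chosen here, not numbers taken from this article, and `years_funded` is a hypothetical helper.

```python
TOTAL_AI_SPEND_BN = 650  # headline figure from the article, in billions of US dollars


def years_funded(total_bn: float, annual_budget_bn: float) -> float:
    """Number of years `total_bn` would cover at a flat annual budget."""
    return total_bn / annual_budget_bn


if __name__ == "__main__":
    # Assumed annual budgets in billions of dollars (illustrative inputs).
    for budget in (20, 25):
        years = years_funded(TOTAL_AI_SPEND_BN, budget)
        print(f"At ${budget}B a year: {years:.1f} years")
```

A flat $20 billion budget gives 32.5 years and a flat $25 billion budget gives 26 years, so the comparison holds in spirit either way but the exact figure moves with the input.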

The physical reality is equally staggering. These data centers demand gigawatts — not megawatts — of continuous power. A single large AI facility can draw as much electricity as a major metropolitan area. The land requirements span hundreds of acres. The cooling infrastructure alone — water systems, thermal management — rivals industrial manufacturing plants in complexity.

What amplifies the scale further is the partnership architecture surrounding individual bets. Microsoft and OpenAI aren’t simply collaborating; they’re co-constructing infrastructure neither could build alone. The Stargate alliance of Oracle, OpenAI, and SoftBank pools capital, cloud distribution, and political relationships into a single coordinated force. These aren’t investments running in parallel. They’re interlocking systems, each one reinforcing the next, designed to make the entire edifice too large and too embedded to fail quietly.

The Risks Nobody Wants to Talk About

For all the confidence radiating from earnings calls and press releases, the risks here are real — and the people spending the money know it.

The most immediate concern is overcapacity. When every major player builds simultaneously at maximum scale, the collective supply of AI compute could outpace actual demand, at least in the near term. Data center overcapacity has happened before in tech, and the aftermath is rarely pretty. Stranded assets, margin compression, frantic pivots. The difference this time is that the sums involved carry considerably more zeros.

Energy and environmental pressures are hardening into genuine constraints. Gigawatt-scale power demands are already straining regional grids and attracting regulatory scrutiny. Water consumption for cooling is drawing environmental opposition in drought-prone regions. The sustainability narrative that Big Tech spent a decade carefully constructing is under serious pressure from the infrastructure demands of the AI boom it helped create.

Then there’s geopolitics. The overwhelming dependence on Nvidia chips — manufactured via TSMC in Taiwan — represents a supply chain vulnerability that no amount of capital expenditure can fully hedge. Export restrictions, semiconductor policy shifts, or regional instability could disrupt the entire ecosystem overnight.

Finally, the ROI question. These are seven-to-eight-year capital programmes. The revenue models that justify them (AI cloud services, model licensing, enterprise software) are still maturing. The bet is that demand grows into the supply. History suggests that’s not guaranteed.

What This Means Beyond Big Tech

The consequences of this investment wave extend well beyond the companies writing the cheques.

For smaller businesses and startups, the infrastructure buildout is genuinely double-edged. On one hand, hyperscale investment drives down the cost of cloud compute over time — more supply, more competition, lower prices. Access to frontier AI capabilities through APIs and cloud platforms becomes cheaper and more reliable as infrastructure matures. That’s a real democratising force.

On the other hand, the concentration of this infrastructure among a handful of players creates deep dependencies. If your product runs on Azure AI or AWS Trainium, your cost structure, reliability, and ultimately your competitive position are tied to decisions made in Redmond or Seattle boardrooms.

The ripple effects touch virtually every industry. Healthcare systems training diagnostic models, financial institutions running real-time risk analysis, logistics companies optimising supply chains — all of them depend on the infrastructure being built right now. The quality, accessibility, and pricing of that infrastructure will shape what’s possible for an entire generation of applications.

This isn’t just a tech story. It’s the story of who controls the foundational layer of the next economy — and on what terms everyone else gets access to it.

The Bet of the Century

The question was never really whether AI infrastructure would reshape the world. That ship has sailed: the concrete is being poured, the chips are being installed, the gigawatts are being contracted. The only questions that remain are how fast, and who controls the layers everything else gets built on top of.

$650 billion is an answer of sorts. It’s the collective conviction of the most capitalised companies in human history, expressed not in words but in data centers, silicon, and land.

They could be wrong. The history of technology is littered with expensive certainties that didn’t pan out.

But if they’re right — even partially right — we’re watching the foundation of the next economy being laid in real time.

That’s worth paying attention to.

Enjoyed this piece? Explore our full coverage of the AI infrastructure race — or subscribe for weekly analysis on the technologies reshaping business and society.

Frequently Asked Questions

1. Which company is investing the most in AI infrastructure right now?

On the figures in the table above, Amazon has the largest stated commitment, with more than $100 billion for 2025 and guidance of up to $200 billion for 2026. Microsoft is close behind, with $80 billion deployed in 2025 and projections exceeding $150 billion in 2026, supercharged by its Stargate supercomputer partnership with OpenAI. The honest answer, though, is that the gap between the top players is narrowing fast. This is a race with multiple horses in front.

2. Why are these companies spending so much money on AI infrastructure specifically?

Training and running advanced AI models demands extraordinary amounts of computing power, far more than conventional software. Every new generation of AI model requires more chips, more energy, and more specialised hardware than the last. Companies that control that infrastructure control who gets access to AI capabilities, at what price, and on what terms. In a world where AI is expected to reshape entire industries, owning the foundational layer isn’t optional. It’s the whole game.

3. What is the Stargate project and why does it matter?

Stargate is a joint AI infrastructure initiative involving Microsoft, OpenAI, Oracle, and SoftBank, with a projected total investment of $500 billion. At its core, it’s an effort to build the most powerful AI supercomputing infrastructure ever constructed, spanning data centers, chips, and cloud distribution networks across the United States. It matters because its scale is genuinely unprecedented, and because it signals that AI infrastructure is now being treated as a national-level strategic priority, not just a corporate technology investment.

4. What are the biggest risks associated with this level of AI spending?

Three risks stand out. First, overcapacity: if collective supply outpaces real-world demand, the industry faces painful corrections and stranded assets at enormous scale. Second, energy and environmental constraints: gigawatt-scale power consumption is already straining grids and attracting regulatory attention. Third, geopolitical vulnerability: the overwhelming dependence on Nvidia chips manufactured in Taiwan represents a supply chain concentration that no amount of capital can fully insulate against. The companies spending this money are aware of all three. Whether their mitigation strategies are sufficient remains an open question.

5. How will this AI infrastructure investment affect everyday businesses and consumers?

Over time, competition among hyperscalers should drive down the cost of accessing AI-powered cloud services, making advanced capabilities more affordable for businesses of all sizes. For consumers, it means faster, more capable AI tools embedded in the products and services they already use. The longer-term concern is concentration: as infrastructure consolidates around a small number of dominant players, smaller companies and developers become increasingly dependent on the terms those players set. The buildout democratises access in the short term, but the power dynamics it creates are worth watching closely.