AI Replacing Jobs in 2026: Meta, Nvidia, Google & Apple’s Massive Shift Explained

The Year Work Changed Overnight

The headlines arrived like body blows, one after another, relentless.

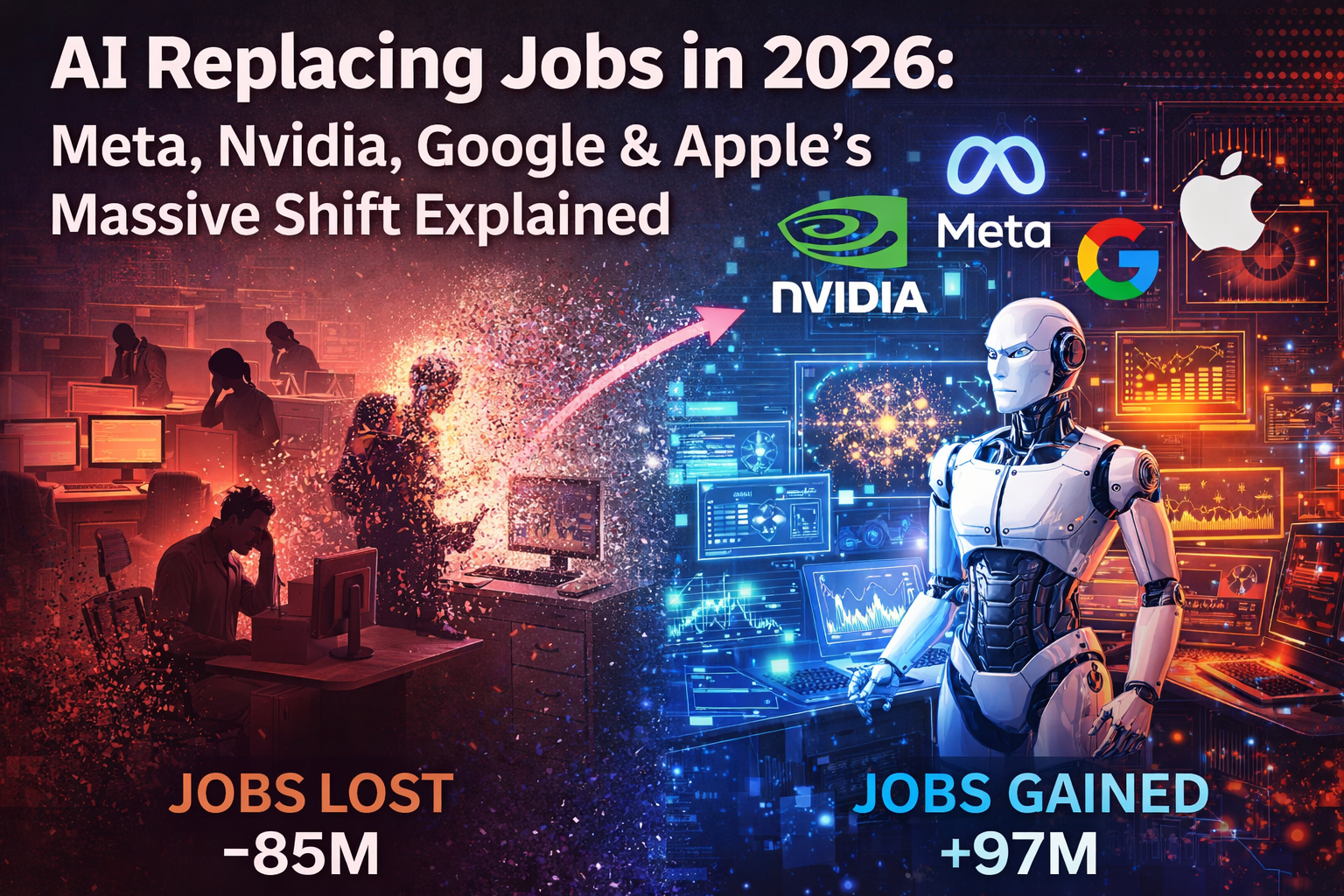

Meta cuts 15,800 jobs. Scroll. Google axes design teams. Scroll. Apple announces 20,000 new hires. Scroll. 55,775 tech workers lose their jobs in a single quarter. Scroll. AI creates 1.3 million new roles globally. Scroll. Scroll. Scroll.

At some point in early 2026, you had to stop and ask: what is actually happening?

Because the story didn’t fit a single, clean narrative. It wasn’t the robot apocalypse the doomsayers promised. It wasn’t the frictionless AI utopia the optimists sold us either. It was messier, faster, and far more complicated than either camp had the honesty to admit. Hiring and firing were happening simultaneously, sometimes at the same company, sometimes in the same month. The economy of work wasn’t collapsing — it was mutating, in real time, right in front of us.

Here’s the number that stopped a lot of people cold: 37% of companies globally are planning to replace workers with AI by the end of 2026. Not automate alongside workers. Not augment their output. Replace. That figure, sitting inside a quietly devastating HR report, tells you everything about the mood in boardrooms right now — and very little about the full picture. Because that same landscape is producing entirely new categories of jobs that didn’t exist five years ago, paying salaries that would have seemed absurd a decade back.

So which is it? Mass displacement or unprecedented opportunity?

The honest answer — the one no one in a LinkedIn post will give you — is both. Simultaneously. Depending entirely on who you are, what you do, and how fast you’re paying attention.

This is the story of how four of the most powerful companies on earth are navigating that contradiction. Each one differently. Each one revealing something the others won’t say out loud. Together, they form a map of where work is going — and a warning about what gets left behind when the destination changes this quickly.

The year work changed overnight isn’t a prediction anymore.

It’s already here.

The Big Shift: From Human Labor to AI-Augmented Work

Let’s be precise about what’s actually happening, because the word “replacement” is doing a lot of heavy lifting right now, and it’s not always earning it.

When a company says it’s using AI to “improve efficiency,” it usually means one of two very different things. The first is automation — a machine performing a task a human used to do, faster, cheaper, and without a salary. The second is augmentation — a human performing a task with AI assistance, producing more output, with less friction, in less time. Both are real. Both are accelerating. But they are not the same thing, and collapsing them into a single headline is how we end up either catastrophizing or cheerleading when neither response is useful.

The roles feeling the sharpest edge of pure automation right now are, broadly speaking, the ones built around repetition. Data entry. Quality assurance. Tier-one customer support. Basic content moderation. These are not low-value jobs to the people who hold them — but they are, structurally, exactly the kind of tasks that machine learning eats for breakfast. Pattern recognition. Rule-based decision trees. High volume, low variance. The formula for automation has always been: if a human can be trained to do it consistently, a model can eventually be trained to do it better. In 2026, “eventually” has become “now.”

But here’s what the replacement narrative consistently misses.

The same AI systems eliminating those roles are simultaneously creating demand for something far more interesting: people who can work with the machine rather than be substituted by it. Prompt engineers who know how to extract precision from a model’s latent capabilities. MLOps specialists who keep AI infrastructure from quietly drifting off the rails. AI ethicists with enough technical fluency to push back when a system produces outcomes a company can’t defend in public. Domain-specific machine learning experts who understand not just the model, but the industry the model is operating in. These roles don’t just exist; they’re in short supply relative to demand, and companies are quietly desperate for people who can fill them.

What we’re witnessing, then, is less a firing and more a re-sorting. The workforce isn’t shrinking; it’s being reorganized around a new axis. The old axis was task volume: the more tasks you could handle, the more valuable you were. The new axis is AI fluency: the more effectively you can direct, evaluate, and collaborate with AI systems, the more indispensable you become.

This shift is happening everywhere. But nowhere is it more visible, more dramatic, and more instructive than at the top of the tech industry, where the budgets are largest, the moves are boldest, and the stakes are high enough that nobody can afford to get this wrong.

Meta, Nvidia, Google, Apple. Four giants. Four distinct playbooks. One shared reality.

The future of work is being written in their decisions right now. Let’s read what they’re actually saying.

Meta’s AI Pivot: Cutting Thousands to Build Intelligence

Mark Zuckerberg has never been a man who does things quietly.

In January 2026, he didn’t announce a restructuring. He announced a reckoning. Up to 15,800 Meta employees, roughly 20% of the entire company’s workforce, were told their roles no longer fit the organisation Meta was becoming. It was the largest internal upheaval the company had seen since 2022, and it landed with the kind of institutional force that sends shockwaves far beyond the people directly affected. This wasn’t trimming fat. This was a surgical removal of entire layers of the company’s operating anatomy.

The question everyone wanted answered was simple: why?

The answer Zuckerberg gave was equally simple, delivered with the calm certainty of someone who has already decided what chapter comes next. Artificial intelligence, he said, would “dramatically” reshape how Meta operates. Not gradually. Not eventually. Dramatically. The word choice was deliberate. This was a CEO telling his company, his investors, and frankly the entire industry that the old model of scaling human headcount alongside revenue growth was finished. Something had replaced it. And that something was being funded to the tune of $115 to $135 billion in AI capital expenditure for 2026 alone.

Read that number again. Let it sit.

That figure isn’t a research budget. It isn’t a speculative moonshot allocation tucked away in a footnote. It is the single largest infrastructure commitment in Meta’s history, pointed squarely at AI development, AI hardware, and AI capability at a scale that most sovereign nations couldn’t match. It represents a fundamental answer to the question of what Meta believes it is building toward. Not better social media. Not a more refined version of Instagram. Something larger, more autonomous, and considerably more powerful.

To understand where the cuts landed, you have to understand how Zuckerberg thinks about organisational efficiency. Middle management has always been, in the language of tech leadership, a “coordination layer.” Its job is to translate strategy into execution, to move information between teams, to resolve friction before it becomes failure. It is, in other words, exactly the kind of function that an AI agent can perform. Not partially. Not approximately. Comprehensively. When a model can synthesise updates across forty workstreams, flag bottlenecks in real time, and route decisions to the right person without a single calendar invite, the case for keeping that coordination layer human becomes genuinely difficult to make. Meta stopped trying to make it.

Reality Labs took a particularly sharp hit, with over 1,500 roles cut in the early months of 2026. This was, on the surface, the most surprising move. The metaverse was supposed to be Zuckerberg’s legacy project, the long bet that would outlast every cycle of criticism and every quarterly miss. But what actually happened was more nuanced than a strategic retreat. The staffing of Reality Labs was rationalised while the research direction quietly pivoted toward AI integration within mixed reality rather than the immersive social world that never quite arrived. The headcount shrank. The ambition, characteristically, did not.

Operations across the company followed the same logic. Customer support workflows, internal communications infrastructure, process coordination across global teams — anywhere that an AI agent could take over a repeatable, structured task, it was given the handoff. The human beings who previously owned those workflows were, in many cases, handed their notice instead.

What makes Meta’s approach distinct from simple cost-cutting dressed up in AI language is where the money went after the layoffs. This is not a company that cut 15,800 jobs and quietly distributed the savings to shareholders. The investment thesis is visible, specific, and aggressive. Meta has been recruiting directly from Scale AI, one of the most respected AI data and training organisations in the world, pulling in engineers and researchers who understand not just how to deploy AI systems but how to build the foundational layers underneath them. New AI labs have been stood up. Superintelligence research, once the exclusive territory of OpenAI and DeepMind, is now an explicit part of Meta’s stated ambition.

Zuckerberg’s version of the future is one where AI agents don’t assist human workers so much as operate alongside them as functional colleagues, handling the coordination, the administration, the support infrastructure that makes a large organisation run. In that version, you need far fewer humans managing process and far more humans managing intelligence. The 15,800 people who lost their jobs were, in his framework, casualties of a structural obsolescence rather than a financial shortfall. Meta wasn’t struggling. It was choosing.

That distinction matters. Because it tells you this wasn’t a company reacting to bad numbers. It was a company making a calculated bet that the organisations who move fastest toward AI-first operations will own the next decade of the internet. The severance packages were generous. The vision was unambiguous. And the message to every other tech company watching was impossible to misread.

The age of scaling humans is over.

The age of scaling intelligence has begun.

Nvidia’s Counter Narrative: AI Doesn’t Kill Jobs — It Creates Them

While the rest of Silicon Valley was signing severance paperwork, Jensen Huang was doing something considerably more unusual.

He was hiring.

In an industry where 2026 became synonymous with workforce reduction, where the word “restructuring” arrived in inboxes with the regularity of a bad season, Nvidia stood apart with a quietness that was almost provocative. No mass layoffs. No dramatic announcements about AI replacing coordination layers. No carefully worded press releases explaining why fifteen thousand people were no longer a strategic fit. Just a company growing into the demand that everyone else was generating by spending billions on the hardware only Nvidia could supply.

The contrast wasn’t subtle. It was architectural.

But Jensen Huang didn’t just let the numbers speak for themselves. He went further. When asked about the wave of AI-linked job losses sweeping through tech, his response landed somewhere between philosophical rebuttal and outright dismissal. Companies blaming AI for workforce cuts, he argued, were suffering not from technological disruption but from something far less flattering: a lack of imagination.

It’s a sharp thing to say. And it’s worth unpacking rather than simply quoting, because the argument underneath it is more serious than the provocation suggests.

Huang’s position is that the fear of AI as a job destroyer fundamentally misunderstands what AI actually does to an economy. Every major technological shift in history, from electrification to the internet, generated the same anxiety. And every time, the anxiety turned out to be structurally correct in the short term and dramatically wrong in the long term. Jobs in one category disappeared. Jobs in five new categories emerged to replace them, often paying better, often requiring more interesting work, often at a scale nobody had predicted. The people who couldn’t see those new categories coming weren’t victims of technology. They were, in Huang’s framing, victims of insufficient vision.

What he sees coming this time is physical AI. And the numbers he attaches to it are not modest.

Physical AI, in Nvidia’s vocabulary, refers to AI systems that operate in the real world rather than inside a screen. Robotics. Autonomous systems. Machines that perceive their environment, make decisions in real time, and perform tasks that previously required a human body in a specific location. Manufacturing floors. Warehouses. Surgical suites. Construction sites. Agricultural operations. The entire vast economy of physical labour that the first wave of AI, the language models and image generators and customer service bots, never touched.

Huang believes this is a trillion-dollar market in the making. Not a distant, theoretical trillion dollars. A near-term, buildable, infrastructure-backed trillion dollars that Nvidia intends to sit at the center of. Its robotics platforms, its simulation environments for training physical AI systems, its GPU architectures designed for real-time spatial reasoning are not side projects. They are the next chapter of the company’s core business, written in the language of a world where machines move through physical space as fluidly as they currently move through data.

And here is where Huang’s job creation argument becomes concrete rather than rhetorical.

Physical AI doesn’t eliminate the need for human intelligence. It redirects it. Every autonomous system deployed in a factory needs someone to oversee it, to handle edge cases the model wasn’t trained for, to make judgment calls that fall outside the statistical distribution of the training data. Every robotics operation at scale needs management infrastructure, workflow design, safety auditing, ethical oversight. Every human-AI collaboration in a physical environment needs people who understand both sides of that collaboration well enough to make it work without incident.

These are not the jobs of today rebranded with an AI prefix. They are genuinely new roles, requiring genuinely new skill combinations, emerging from a genuinely new category of economic activity. Oversight specialists. Human-AI workflow architects. Physical AI deployment managers. Robotics operations leads with domain expertise in specific industries. The job titles don’t exist yet in their final form. The demand for the underlying capabilities is already real and accelerating.

There is, of course, a layer to Nvidia’s position that deserves honest acknowledgment. The company is not a neutral observer of the AI economy. It is perhaps the single most commercially invested participant in its expansion. Every GPU sold to Meta to build AI agents is Nvidia revenue. Every dollar Google spends on Gemini infrastructure runs, in significant part, on Nvidia silicon. Every robotics startup building physical AI systems needs Nvidia’s platforms to train and deploy them. When Jensen Huang argues that AI creates rather than destroys jobs, he is also, inevitably, arguing for the continued and accelerating adoption of the technology his company provides.

That doesn’t make him wrong. It just means the argument should be read with full awareness of the speaker’s position in the ecosystem.

What it does make him, unambiguously, is the most interesting contrarian voice in a conversation dominated by anxiety. While competitors were cutting to fund AI investment, Nvidia was demonstrating that a different relationship between the two was possible. You could build the infrastructure layer of an AI-driven economy and grow your workforce doing it. You could believe in physical AI as a job creator rather than a job eliminator and bet the company’s direction on that belief.

And crucially, you could watch everyone else spend hundreds of billions of dollars building on top of your technology, and understand that the real power in this shift isn’t who gets replaced.

It’s who built the foundation they’re all standing on.

Google’s Quiet Restructuring: Efficiency in the Age of AI

Google doesn’t announce things the way Meta does.

There is no all-hands energy, no Zuckerberg-style declaration of civilisational intent, no singular number large enough to dominate a news cycle for a week. What Google does instead is something considerably harder to write about and considerably easier to miss: it moves incrementally, consistently, and with the institutional patience of a company that has been the most powerful force in consumer technology for two decades and sees no particular reason to perform urgency for an audience.

But make no mistake. Google is restructuring. It is just doing it quietly enough that by the time most people notice, the architecture of the company will already look fundamentally different.

The most visible data point from 2026 is the one that landed with a specific, human texture that abstract layoff numbers rarely carry. Over a hundred design roles, gone. Not engineering. Not product management. Not the categories tech coverage instinctively reaches for when it talks about AI displacement. Design. The people responsible for how Google’s products feel, how they communicate visually, how the interface between a billion users and an extraordinarily complex system gets translated into something navigable and intuitive. When a company starts cutting designers, it is telling you something specific about what it believes AI can now do, and what it has decided it no longer needs humans to do for it.

The cuts didn’t stop at design. Search teams, advertising infrastructure, and the management layers sitting between strategic leadership and execution have all been subject to ongoing streamlining throughout 2026. The language Google uses is careful and consistent: efficiency, focus, prioritisation. These are words chosen for their capacity to frame reduction as intentionality. Fewer people doing more targeted work toward more specific goals. The corporate vocabulary of optimisation, applied to human beings.

At the centre of this reorientation is Gemini. Google’s flagship AI model series has become the axis around which the entire company is reorganising itself. Infrastructure investment to support Gemini’s development and deployment is substantial, ongoing, and clearly the destination that the efficiency savings are funding. The logic is visible even if it is rarely stated directly: reduce headcount in the areas where AI can absorb the workload, redeploy capital into the AI infrastructure that makes that absorption possible, and emerge on the other side of the transition as a leaner, more computationally powerful organisation than the one that went in.

Which is precisely where the critics start reaching for a phrase that has become one of the more useful pieces of vocabulary in 2026’s technology discourse: AI-washing.

AI-washing, in its clearest definition, is the practice of framing ordinary corporate cost-cutting as AI-driven transformation. It is the difference between a company that is genuinely restructuring its operations around new technological capabilities and a company that is using AI as a narrative vehicle to justify workforce reductions it would have pursued regardless. The distinction matters enormously for employees, for investors, and for anyone trying to understand whether the changes happening at a given company represent genuine strategic evolution or financial engineering dressed in a Silicon Valley accent.

The case for applying that label to Google is not without merit. The company’s 2026 cuts are, individually, smaller than Meta’s seismic 20% reduction. They are diffuse rather than concentrated, spread across departments and quarters rather than delivered in a single structural announcement. Critics argue that this diffusion is precisely what allows the AI-washing mechanism to work most effectively: each individual cut is explicable as an efficiency measure, each individual team reduction is defensible as a strategic prioritisation, and the cumulative picture of a company significantly reducing its human workforce while simultaneously telling the world it is investing in AI never has to be confronted in its totality.

The counter-argument is that Google’s investments in AI infrastructure are substantively real, technically significant, and not merely rhetorical. Gemini is not a press release. The computational infrastructure being built to support its development represents genuine capital allocation toward genuine capability. The roles being created in AI ethics, model oversight, and cross-functional AI collaboration are not window dressing. They reflect a real and growing institutional need for people who can navigate the increasingly complex relationship between AI systems and the humans responsible for them.

Those emerging roles deserve particular attention, because they represent something important about where skilled work is actually going inside a company like Google. AI ethics specialists, sitting at the intersection of technical understanding and social accountability, are becoming some of the most genuinely influential people in large tech organisations. Their work is not peripheral. When a Gemini output creates a reputational problem, when an automated ad system produces results that no human would have approved, when a search algorithm update creates unintended consequences at planetary scale, these are the people who have to have answers. The demand for that kind of combined fluency, technical enough to understand the system, humanistic enough to evaluate its impact, is accelerating faster than universities can produce it.

Cross-functional AI collaboration is the other growth area that Google’s restructuring quietly points toward. As AI systems become integrated into search, into advertising, into the core products that generate Google’s revenue, the people who can work fluidly across the boundaries between model development, product design, business strategy, and user research become disproportionately valuable. These are not narrow specialists. They are integrators, people who speak enough of every relevant language to make the connections that siloed expertise misses.

What Google’s 2026 story ultimately reveals, stripped of both the AI-washing critique and the corporate efficiency framing, is a company in genuine transition that hasn’t yet decided how transparent it wants to be about the full nature of that transition. The cuts are real. The investment is real. The emerging roles are real. The discomfort of holding all three of those facts simultaneously, without collapsing them into a single clean narrative, is also real.

Google built its empire on organising the world’s information.

Right now, it is quietly in the business of reorganising itself.

Apple’s Different Play: Hiring Instead of Cutting

In a year defined by the sound of doors closing, Apple opened more of them.

While the rest of the industry was generating headlines about severance packages and restructuring announcements, Apple arrived at 2026 with a posture so fundamentally different from its peers that it almost read as a deliberate provocation. No mass layoffs. No efficiency narratives carefully constructed to make workforce reduction sound like strategic genius. No AI-washing, no coordination layer eliminations, no quiet trimming of departments that had become inconvenient in the age of agents. Instead, a company that has spent decades operating on its own timeline, by its own logic, doing precisely what Apple has always done when the rest of the industry zigs.

It zagged. Emphatically. Expensively. And with the kind of long-term conviction that only a company sitting on Apple’s balance sheet can afford to project.

The number at the centre of Apple’s 2026 story is $500 billion. That is the scale of the company’s commitment to American investment over the coming years, a figure so large it operates more like a statement of intent than a budget line. It is Apple telling the world, in the clearest financial language available, that it believes the next era of technology will be built by people, not just by models. That talent, carefully acquired and strategically deployed, remains the irreducible competitive advantage that no amount of AI infrastructure can fully substitute.

Within that broader commitment, the job creation numbers are specific and significant. 20,000 new roles across the United States over a four-year window, with a substantial proportion of those positions materialising by 2026. These are not retail jobs or support roles padded into a headline figure for political optics. They are concentrated in the areas that define Apple’s actual competitive edge in the AI era: artificial intelligence research and development, machine learning engineering, silicon design, and the specialised hardware architecture that makes Apple’s devices perform the way they do. The kind of work that requires years of expertise to do well and cannot be easily replicated by a model trained on publicly available data.

The infrastructure dimension of this expansion is equally telling. Apple’s new Texas AI server facility represents a physical commitment to the AI race that goes beyond hiring announcements and press releases. Server infrastructure at the scale Apple is building is not a short-term asset. It is a decade-long bet on the direction of consumer AI, on the belief that the future of intelligence is not purely cloud-based but intimately connected to the device in your pocket and on your wrist. Apple’s entire product philosophy has always centred on the integration of hardware and software in ways competitors struggle to match. The Texas facility is that philosophy extended into the AI era, a purpose-built environment designed to give Apple’s models the computational foundation they need to operate at the level Apple’s users will eventually demand.

Leadership tells the story of internal urgency more honestly than any press release. The decision to replace Apple’s AI chief mid-cycle was not a minor administrative change. It was a signal, read immediately and correctly by anyone watching the company closely, that the pace of AI development at Apple was being formally escalated. The new direction points toward something Apple had been notably cautious about for years: genuine, substantive upgrades to Siri. The version of Siri that existed before 2026 was, by common consensus including Apple’s own implicit acknowledgment, not competitive with what Google, OpenAI, and Amazon had built. The integration of Google Gemini into Apple’s ecosystem, a partnership that would have been hard to imagine even three years ago, represents the most pragmatic possible answer to that gap. Apple, the company most famous for building everything in-house, decided that winning the AI assistant race mattered more than winning it with exclusively proprietary technology.

The wearables dimension of this competition deserves specific attention because it points toward where Apple’s AI ambitions become most concretely personal. The device you wear on your wrist, the earphones sitting in your ears throughout your day, the glasses that may sit on your face within the next product cycle: these are the surfaces where AI stops being a feature and starts being an experience. Apple understands, perhaps better than any company in the world, that the battle for AI relevance in the consumer market will ultimately be decided not in data centres or model benchmarks but in the intimacy of the human body’s relationship with its devices. The hiring surge, the Gemini integration, the Siri upgrades, the silicon engineering investment: all of it points toward that single competitive thesis.

What makes Apple’s approach genuinely instructive for the broader workforce conversation is not simply that it is hiring while others cut. It is why it is hiring while others cut. The implicit argument Apple is making, with $500 billion and 20,000 jobs as its evidence, is that the companies which treat AI as a replacement mechanism are solving for the wrong problem. The real competitive advantage in the AI era is not how efficiently you can reduce your human headcount. It is how effectively you can combine human expertise with AI capability to build products that neither could produce alone. The silicon engineers who design Apple’s neural processing units are not competing with AI. They are the reason Apple’s AI works as well as it does. The machine learning researchers refining on-device models are not at risk of being replaced by the systems they are building. They are the irreplaceable intelligence behind those systems.

This is a fundamentally different theory of the relationship between human talent and artificial intelligence than the one Meta’s restructuring implies. It is not necessarily a more correct theory. The results of these competing philosophies will take years to fully evaluate. But it is a more hopeful one, and in 2026, hope backed by half a trillion dollars and twenty thousand job offers is worth taking seriously.

Apple has always believed it competes differently.

In the year everyone else started cutting to build intelligence, Apple decided the most intelligent move was to keep building through people.

So far, nothing about Apple’s track record suggests that bet was wrong.

The Numbers Behind the Shift: What the Data Really Says

At some point, the anecdotes have to give way to arithmetic.

Meta’s announcements and Jensen Huang’s philosophy and Apple’s hiring surge are all compelling data points in their own right. But they are also, at the level of individual companies, subject to individual interpretations. What a single CEO says about AI and employment tells you about that CEO. What the aggregate data says about AI and employment tells you about the world. And the world, as it turns out, is carrying a set of numbers in 2026 that deserve to be read with considerably more care than the headlines typically afford them.

Start with the number that keeps appearing in boardroom conversations and HR strategy documents with the kind of frequency that suggests it has genuinely unsettled the people who commission these reports. 37% of companies globally are planning to replace workers with AI by the end of 2026. Not experiment. Not pilot. Not explore the possibility of automation in select workflows. Replace. More than a third of the corporate world has looked at its human workforce and identified a category of roles it intends to eliminate in favour of automated systems within a twelve-month window. That is not a marginal finding. That is a structural signal about where the centre of gravity in workforce planning has moved.

Then there is the quarterly data, which translates the strategic into the viscerally concrete. 55,775 tech workers lost their jobs in the first quarter of 2026 alone. A single quarter. Roughly ninety days. The pace of that number is as significant as the number itself. Layoffs at that velocity, sustained across a quarter, represent not a correction or a one-time restructuring event but a systematic, ongoing process of workforce reduction that shows no signs of decelerating. The tech industry, which spent the better part of two decades being the economic sector most people looked to for employment stability and upward mobility, is now generating job losses at a rate that would have seemed extraordinary even five years ago.

Of those 55,775 losses, 20% were explicitly linked to AI. That fraction is important to hold precisely, because it cuts against two convenient but inaccurate readings of the moment. The first inaccuracy is the claim that AI is responsible for all of it, that every layoff in tech in 2026 is an AI story. It is not. Macroeconomic pressures, post-pandemic hiring overcorrections, interest rate environments and their impact on tech valuations, the natural contraction that follows years of exuberant overstaffing: these forces are real and they are operating simultaneously. The second inaccuracy is the dismissal of AI as a meaningful driver, the argument that the AI-job story is media hysteria and the actual numbers don’t support it. One in five layoffs explicitly attributed to AI, in a sector that shed more than 55,000 jobs in a single quarter, is not a rounding error. It is a trend with momentum.
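For readers who want to see what that fraction means in headcount, here is a minimal back-of-the-envelope sketch. It uses only the two figures quoted above (55,775 layoffs and the 20% AI-linked share). The 90-day quarter is an approximation taken from the "roughly ninety days" phrasing, and the variable and function names are illustrative, not drawn from any report.

```python
# Back-of-the-envelope arithmetic on the figures quoted in the text.
# Assumption: a quarter is treated as 90 days ("roughly ninety days").

TECH_LAYOFFS_Q1_2026 = 55_775   # tech layoffs in Q1 2026, as quoted
AI_LINKED_SHARE = 0.20          # share explicitly linked to AI, as quoted
DAYS_IN_QUARTER = 90            # approximation, not an exact calendar count


def split_layoffs(total: int, ai_share: float) -> tuple[int, int]:
    """Return (AI-linked layoffs, layoffs attributed to other causes)."""
    ai_linked = round(total * ai_share)
    return ai_linked, total - ai_linked


ai_linked, other_causes = split_layoffs(TECH_LAYOFFS_Q1_2026, AI_LINKED_SHARE)
per_day = TECH_LAYOFFS_Q1_2026 / DAYS_IN_QUARTER

print(f"AI-linked layoffs:      {ai_linked:,}")
print(f"Other-cause layoffs:    {other_causes:,}")
print(f"Average layoffs per day: {per_day:.0f}")
```

On these inputs, roughly 11,000 of the quarter's losses are AI-linked and about 44,600 are not, which is why neither the "it's all AI" reading nor the "it's all hysteria" reading survives contact with the numbers.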

And yet the picture is not, if you are willing to look at all of it rather than the most alarming portion, one of unambiguous contraction.

Because on the other side of this ledger sits a number that the layoff stories consistently fail to lead with. AI is creating 1.3 million new jobs globally. Not eventually. Not theoretically. Now, in 2026, in the same economic moment that is generating the severance packages and the restructuring announcements. MLOps engineers maintaining and optimising AI systems at scale. Prompt engineers extracting precision from large language models with the kind of craft that the early dismissal of that job title completely failed to anticipate. AI ethicists sitting inside organisations large enough to understand that deploying powerful AI systems without human accountability frameworks is a liability they can no longer afford. Domain-specific machine learning specialists who understand both the model and the industry it is operating within, a combination so rare that companies are paying extraordinary salaries to secure it.

The 1.3 million figure is real, meaningful, and genuinely cause for something other than despair about the trajectory of human employment in the AI era.

But here is the key insight that the data demands and that almost no one is saying clearly enough.

The imbalance between the roles disappearing and the roles emerging is not simply a numerical one. It is a skill gap of considerable magnitude, and that gap is where the real human cost of this transition is concentrated. The 55,775 people who lost tech jobs in Q1 2026 are not, for the most part, the same people positioned to step into the 1.3 million AI-linked roles being created. A data entry specialist who has spent a decade building expertise in a specific operational workflow does not automatically become an MLOps engineer because the market has decided MLOps engineers are valuable. A quality assurance analyst whose role was absorbed by automated testing infrastructure does not wake up the following Monday as a prompt engineer. The jobs being created require skills that take time, investment, and access to develop. The jobs being eliminated are concentrated among workers for whom that development pathway is least accessible.

This is the structural problem that the aggregate optimism of the 1.3 million figure obscures. The new jobs are real. The opportunity is genuine. But the people who need the opportunity most are frequently the furthest from being able to reach it, not because they lack capability but because the reskilling infrastructure required to bridge that gap has not been built at anything approaching the speed or scale the moment demands.

The numbers, read honestly, tell a story that is neither the catastrophe nor the triumph that competing narratives insist upon.

They tell the story of a transition happening faster than the systems designed to manage it can keep pace with.

And they tell it in a register that is impossible to argue with, because unlike headlines and CEO statements and strategic vision documents, numbers do not have a communications team.

They just say what they say.

Jobs at Risk vs Jobs on the Rise

Not all work is created equal in the eyes of a language model.

Some of it looks, to an AI system, like an extraordinarily straightforward problem. Structured inputs, predictable outputs, rule-based decision trees, high volume and low variance. The kind of work that a well-trained model can absorb almost without noticing. And some of it looks, to that same system, like something it fundamentally cannot do. Judgment under ambiguity. Creative synthesis across unfamiliar domains. Ethical reasoning in contexts where the training data provides no clean precedent. The kind of work that requires not just intelligence but situated intelligence, embedded in a specific human context that no model can fully replicate.

The workforce of 2026 is being sorted along exactly that line. What falls on one side of it and what falls on the other is not arbitrary, not political, and not particularly difficult to predict once you understand the underlying logic. Here is what the sorting actually looks like.

Roles Being Replaced

Data Entry

If there is a ground zero for AI-driven job displacement, this is it.

Data entry is, at its structural core, the human performance of a task that computers were theoretically designed to handle from the moment they were invented. Taking information from one format and transcribing it accurately into another. Reading a document and populating a database field. Extracting figures from an invoice and entering them into a system. The reason humans were doing it at scale for so long was not that it required human intelligence. It was that the systems capable of doing it automatically were, for most of the industry’s history, not reliable enough to trust without supervision.

That reliability threshold has now been crossed. Comprehensively. Optical character recognition combined with large language model interpretation can handle document processing tasks with an accuracy rate that matches or exceeds human performance on virtually every standard benchmark. The argument for keeping humans in that workflow, at scale, has evaporated. The displacement is not coming. In most large organisations, it has already arrived.

Quality Assurance

QA sits in a more nuanced position than data entry, but the trajectory is the same.

Routine quality assurance, the kind that involves checking outputs against a defined specification, flagging deviations from an established standard, running regression tests against known parameters, is exactly the pattern recognition task that machine learning handles with increasing authority. Automated testing frameworks have been maturing for years. What 2026 has added is the AI layer capable of handling the less structured elements of QA, the edge cases that previously required human judgment to evaluate, the contextual assessments that couldn’t be reduced to a simple pass or fail condition.

What remains, and what remains genuinely valuable, is the higher-order QA work. The tester who understands not just whether a product meets its specification but whether the specification itself is right. The analyst who can evaluate an AI system’s outputs for bias, for ethical compliance, for the kinds of failure modes that only become visible when you understand the social context the product operates within. That work is growing in importance and in compensation. The routine work underneath it is being automated out of existence.

Customer Support

The economics of AI-powered customer support became impossible to argue with sometime in 2025, and by 2026 the conversation had moved from whether to automate tier-one support to how far up the stack automation could reasonably go.

Tier-one support, the handling of common queries, standard account issues, predictable troubleshooting pathways, has been largely absorbed by AI agents at every major technology company. The speed advantage is decisive. The cost advantage is overwhelming. The customer satisfaction data, initially mixed, has improved steadily as the models handling these interactions have become more contextually fluent and more capable of recognising when a conversation has moved beyond their competence and needs a human in the loop.

That last clause matters. The human in the loop is not disappearing from customer support. The human is moving up the loop. Complex escalations, emotionally sensitive interactions, edge cases requiring genuine discretion and judgment: these remain human territory, and they represent the portion of customer support work that is not only surviving the automation wave but becoming more valued precisely because it is the work the model cannot do well.

Routine Operational Roles

The category that sits underneath the more specific ones and connects them is the broader class of routine operational work. Scheduling. Basic reporting. Internal coordination. Process monitoring. Workflow administration. The connective tissue of large organisations that keeps information moving between people and systems without strategic intent, without creative judgment, without the kind of contextual authority that makes a human presence genuinely necessary.

AI agents are handling this category with increasing comprehensiveness. Meta’s restructuring of its middle management layer is the most visible expression of this trend, but it is happening at every scale of organisation, in every sector, with a consistency that reflects not a particular company’s strategic choice but a fundamental shift in what human labour is actually needed for inside a complex institution.

Roles Being Created

Prompt Engineering

When the job title first appeared, it was widely dismissed. Getting paid to write instructions to a chatbot was the reductive version, repeated with the confidence of people who had not actually tried to do it at a level that produced consistent, production-grade results.

The dismissal has aged poorly.

Prompt engineering, at the level that makes a meaningful difference to an organisation’s AI output, is a genuine craft. It requires a deep understanding of how large language models process context, where their reasoning tends to degrade, how to structure instructions that produce reliable results across a distribution of inputs rather than just a single carefully chosen example. It requires domain knowledge in the field the model is being applied to, because a prompt that works brilliantly for a general query often fails entirely when the subject matter becomes specialised. It requires iteration, testing, and the kind of systematic thinking about failure modes that engineering disciplines have always demanded.

The organisations that have staffed this function seriously are producing AI outputs that are qualitatively different from those that treated it as a task anyone could pick up in an afternoon. The market has noticed. Compensation for strong prompt engineers at major technology companies reflects exactly the gap between how the role is publicly perceived and how much it is privately valued.

AI Ethics and Governance

If prompt engineering is the craft of making AI systems perform well, AI ethics and governance is the discipline of making them perform responsibly. And in 2026, the distance between those two objectives has become one of the most consequential fault lines in the technology industry.

The need for people who can evaluate AI systems for bias, for discriminatory outcomes, for the social and legal implications of automated decision-making, has grown from a niche academic concern into an urgent institutional priority. Regulatory environments across Europe, the United States, and increasingly Asia are tightening. The reputational cost of an AI system that produces harmful outputs at scale is no longer theoretical. The companies that lack serious internal governance infrastructure are discovering this through the specific discomfort of public accountability moments they were not prepared for.

AI ethicists with genuine technical fluency are among the most sought-after professionals in the current market. The combination is rare: deep enough technical understanding to engage meaningfully with model architecture and training methodology, paired with the philosophical and social science grounding to evaluate what those systems are actually doing to the humans they interact with. Developing both takes time. The demand for people who have both is already outpacing supply by a margin that shows no signs of closing.

MLOps and Machine Learning Engineering

The AI systems that major technology companies are deploying at scale do not maintain themselves. They drift. Their performance degrades as the data distribution they encounter in the real world diverges from the distribution they were trained on. They encounter edge cases their architects did not anticipate. They require monitoring, retraining, version management, infrastructure optimisation, and the kind of ongoing operational attention that any complex system deployed at production scale demands.

MLOps, the discipline of machine learning operations, exists to handle exactly this. It sits at the intersection of software engineering, data science, and systems operations, requiring fluency across all three to do well. The professionals who inhabit this space are responsible for the reliability and performance of AI systems that, in many organisations, are now critical infrastructure. When those systems fail, the consequences are immediate and measurable. The people who prevent those failures are compensated accordingly.

Machine learning engineers more broadly, the practitioners who design, build, and refine the models themselves, remain among the most in-demand and best-compensated professionals in the global technology labour market. The pipeline of qualified talent has not kept pace with the explosion of organisational demand that 2026’s AI investment wave has generated. That gap is structural, persistent, and not going to be resolved quickly.

Domain-Specific AI Specialists

The final category is perhaps the most interesting, because it represents a genuinely new kind of professional that the AI era is creating rather than simply relabelling.

A domain-specific AI specialist is not primarily an AI expert who has learned about a particular industry. They are a domain expert, someone with deep, earned expertise in medicine, law, finance, agriculture, materials science, logistics, or any other complex field, who has developed sufficient AI fluency to deploy, evaluate, and direct AI systems within that domain effectively.

This combination is extraordinarily powerful and extraordinarily rare. A language model trained on medical literature can generate impressive-sounding clinical text. A doctor who understands what that model is actually doing, where it is reliable and where it is dangerously overconfident, and how to use it to augment clinical judgment rather than replace it, is something the model itself can never be. That expertise is irreplaceable. It is also, in 2026, worth more than it has ever been.

The same logic applies across every specialised domain. The professionals who understand both sides of the human-AI interface in a specific field are not competing with the AI systems in that field. They are the reason those systems can be trusted to operate within it.

The line between the roles disappearing and the roles emerging is not a line between the skilled and the unskilled, the educated and the undereducated, the fortunate and the left behind. It is a line between the replaceable and the irreplaceable. And in 2026, what makes a human being irreplaceable in their working life is shifting in ways that are real, urgent, and not yet fully understood by the systems, educational, institutional, governmental, that are supposed to prepare people for exactly this kind of transition.

The jobs are changing. The question is whether we are changing fast enough to meet them.

Who’s Most Vulnerable — and Who Wins

Here is the uncomfortable truth that the reskilling conversation consistently dances around without quite landing on.

The people most at risk from the AI-driven workforce transformation of 2026 are not the ones anyone expected. The popular imagination of AI displacement has always pictured it happening at the bottom of the economic ladder first. Low-wage, low-skill, easily automated roles disappearing while the professional classes watched from a safe distance, insulated by the complexity of their expertise and the salary premium that complexity commanded. That picture was always more comforting than accurate. And in 2026, its inaccuracy has become impossible to ignore.

The professionals carrying the most exposure right now are, in many cases, the ones earning the most.

A senior manager with fifteen years of experience coordinating teams, synthesising reports, translating executive strategy into operational execution, and managing the human friction that inevitably accumulates inside large organisations is not facing competition from a minimum-wage worker being displaced by a self-checkout machine. They are facing competition from an AI agent that can do every structural element of their coordination function faster, cheaper, and without the interpersonal complexity that makes human management both valuable and expensive. The tasks that justified their compensation, the synthesis, the routing of information, the meeting-heavy choreography of keeping multiple teams aligned, are precisely the tasks that AI handles most fluently. The salary that reflected the scarcity of people who could perform those tasks reliably now looks, to a CFO staring at a $135 billion AI capex commitment, like an inefficiency waiting to be resolved.

This is the middle management squeeze, and it is the single most consequential and least publicly discussed dimension of the AI workforce story. Middle management is not one job. It is a category of organisational function that exists at every large company in every sector, representing millions of roles globally, most of them well-compensated, most of them built around exactly the coordination and synthesis capabilities that AI is rapidly absorbing. The people in those roles did not make poor career choices. They built genuine expertise in functions that were genuinely valuable. The problem is that the value of those functions has been structurally undermined by the same technological shift that is generating billions in AI investment at the very companies that employ them.

Meta’s 2026 restructuring is the clearest expression of this dynamic at scale. But it is not the only one. The same management-layer compression is happening at Google, at mid-tier technology companies, and at financial services organisations, consulting firms, and media companies that have not made the front page with their restructuring announcements. It is the quiet story underneath the loud one, and it is affecting a demographic of workers who have the least institutional sympathy and the least organised advocacy in conversations about AI displacement.

The contrast with AI-literate workers could not be more pronounced.

The advantage shift toward professionals with genuine AI fluency is not marginal. It is not a modest premium for a nice-to-have skill that distinguishes otherwise equivalent candidates. It is a fundamental revaluation of what human labour is worth in a world where AI handles an expanding range of cognitive tasks. The MLOps engineer who keeps production AI systems performing reliably is not slightly more valuable than they were two years ago. They are categorically more valuable, operating in a market where demand has dramatically outpaced supply and shows no structural sign of correcting. The AI ethicist who can navigate a company through the regulatory and reputational implications of a problematic model output is not just useful. They are, in an environment of tightening AI governance requirements, essential. The domain specialist who combines deep field expertise with AI fluency is not competing with colleagues. They are operating in a different market entirely, one with fewer participants and considerably higher compensation.

What separates the vulnerable from the advantaged in this environment is not intelligence, not work ethic, not the quality of the career decisions someone made ten years ago. It is a specific kind of literacy that, until very recently, most educational and professional development systems did not treat as a priority. The ability to work with AI systems rather than simply alongside them. The understanding of what these tools can and cannot do, where their outputs can be trusted and where they require evaluation, how to direct them effectively toward specific goals rather than accepting whatever they produce as authoritative. This literacy is learnable. It is not the exclusive property of people with computer science degrees or machine learning research backgrounds. But it requires deliberate effort, structured learning, and access to resources that are not yet distributed equitably across the workforce.

Which is where reskilling becomes not a human resources talking point but a genuine social and economic priority.

The reskilling conversation has been happening for years at a comfortable level of abstraction. Companies announce reskilling programmes. Governments commission workforce transition reports. LinkedIn publishes data about the skills of the future. And meanwhile the gap between the rate at which roles are being displaced and the rate at which displaced workers are being equipped for the roles being created continues to widen, not because the problem is insoluble but because the investment in solving it has not matched the urgency of the situation.

The workers who will navigate this transition successfully are not necessarily the ones with the most existing credentials or the most senior positions. They are the ones who recognise what is happening early enough to respond to it, who access reskilling resources with the same seriousness they once brought to developing the expertise that made them valuable in their previous roles, and who understand that AI literacy is not a one-time credential to be acquired and filed but a continuously evolving practice that requires ongoing attention as the technology itself continues to evolve.

The workers who will struggle are not the ones who lack capability. They are the ones who wait for the system to come to them rather than going to the system. They are the ones who interpret the current moment as a temporary disruption that will stabilise back into familiar territory rather than a structural shift that has permanently changed the terrain. They are the ones who mistake seniority for security in an environment where the value of seniority has been partially decoupled from the functions that seniority used to exclusively perform.

There are no clean heroes and villains in this story. The technology is not malevolent. The companies deploying it are not operating outside the logic of the economic system they exist within. The workers being displaced are not casualties of their own complacency. What is happening is the collision of an extraordinarily powerful technological capability with an economic and institutional infrastructure that was not built to absorb change at this velocity.

Who wins in that collision is not predetermined.

It depends, more than most people are currently willing to acknowledge, on how quickly the individuals, institutions, and systems responsible for workforce development decide that the urgency of this moment deserves a response equal to its scale.

The technology is not waiting for that decision.

The Real Answer: Is AI Replacing Jobs or Reshaping Them?

We came here with a question. It deserves a straight answer.

Is AI replacing jobs in 2026?

Yes.

Is AI reshaping jobs in 2026?

Also yes.

These are not competing answers. They are not a politician’s both-sides evasion or a consultant’s way of billing twice for the same conclusion. They are the only honest response to a situation that is genuinely, structurally, simultaneously both things at once. The error that almost every commentary on this moment makes is the insistence on resolving the tension into a single narrative. The optimists need it to be a reshaping story because the alternative is too uncomfortable to build a LinkedIn post around. The pessimists need it to be a replacement story because nuance doesn’t generate the engagement that fear reliably does. Neither camp is describing the actual world.

The actual world looks like this.

For the worker whose role was built around repetition, around the faithful execution of structured tasks at high volume with low variance, the answer is replacement. Not eventually. Not theoretically. Now. The data entry specialist, the tier-one support agent, the QA analyst running regression tests against known parameters, the operational coordinator whose job was fundamentally the movement of information between people and systems without strategic judgment: these roles are being absorbed by AI systems that perform the same functions faster, cheaper, and without the overhead of human employment. The displacement is real, it is accelerating, and dressing it up in the language of transformation does nothing for the people experiencing it as straightforward job loss.

For the worker whose role requires creative synthesis, technical depth, strategic judgment, or the kind of domain expertise that takes years to build and cannot be extracted from a training dataset, the answer is expansion. Not the modest expansion of a marginal salary premium but the categorical expansion of a market that is creating demand faster than supply can meet it. The machine learning engineer, the AI ethicist, the domain specialist who understands both their field and the AI systems operating within it, the prompt engineer who treats model interaction as a genuine craft: these people are not competing with AI. They are the reason AI produces anything worth using. Their value in 2026 is higher than it has ever been, and the trajectory points further upward.

The uneven distribution of these two realities is where the human cost lives. And it is the part of the story that the aggregate optimism of job creation statistics most dangerously obscures.

Big Tech, in 2026, is the most instructive proof of this duality because it is where both dynamics are operating at maximum visibility and maximum velocity simultaneously. Look at what is actually happening across the four companies at the centre of this story and the simultaneity becomes impossible to deny.

Meta cuts 15,800 roles and commits $135 billion to AI in the same breath. The cuts and the investment are not separate decisions. They are the same decision, expressed in two different directions. Google streamlines design and management layers while building out AI ethics and Gemini infrastructure teams. Two organisational movements, opposite in direction, driven by the same underlying logic: automate what can be automated, invest aggressively in what cannot. Apple adds 20,000 jobs precisely because it has decided that the competitive advantage of the next decade lives in the combination of human expertise and AI capability that only serious talent acquisition can produce. Nvidia hires into a robotics and physical AI expansion that exists because the rest of the industry is pouring enormous sums into AI infrastructure that runs largely on Nvidia’s hardware.

Cuts and hiring. Simultaneously. At the same companies. In the same year.

This is not contradiction. This is the mechanism of the transition made visible.

What Big Tech is showing us, whether it intends to or not, is that the AI economy does not operate as a single tide that either lifts all boats or swamps them. It operates as a sorting function, moving resources from one category of human capability to another with a speed and efficiency that the social systems designed to manage workforce transitions were simply not built to handle. The people whose capabilities fall into the category the market is moving toward are experiencing an extraordinary moment of opportunity. The people whose capabilities fall into the category the market is moving away from are experiencing something considerably more difficult, with considerably less institutional support available to help them navigate it.

The answer to the question this blog was built around is therefore not a reassurance and not an alarm.

It is a challenge.

The jobs being replaced are, for the most part, already gone or in the process of going. That is not a reversible trajectory. The jobs being created are real, numerous, and in many cases accessible to people who are willing to invest seriously in developing the literacy the new economy demands. That accessibility is not guaranteed and it is not evenly distributed, but it exists in a way that makes the pessimist’s total displacement narrative as inaccurate as the optimist’s frictionless transition story.

What this moment actually requires is the least glamorous thing anyone writing about AI in 2026 wants to say.

It requires honesty about who is being affected and how. It requires investment in reskilling infrastructure at a scale commensurate with the disruption being generated. It requires organisations to accept that efficiency gains extracted from workforce reduction carry a social cost that balance sheets do not capture. It requires individuals to treat AI literacy not as an optional professional development activity but as the defining skill of the decade they are living through.

And it requires all of us, writers and readers and workers and leaders, to resist the comfort of the simple story.

Because the simple story, whichever version of it you prefer, is the one thing 2026 has definitively proven is no longer available.

The complex one, the real one, the one where replacement and reshaping are happening together and the outcome is still genuinely undecided: that is the story we are all inside right now.

The question is not whether AI is changing work.

The question is whether we are changing fast enough to change with it.

Final Take: The Workforce Isn’t Shrinking — It’s Mutating

There is a paradox sitting at the heart of everything this blog has examined, and it is worth naming directly before we close.

The same money funding the layoffs is funding the future.

Meta’s $135 billion AI commitment is not separate from its 15,800 job cuts. It is downstream of them. The severance packages and the superintelligence labs are entries in the same ledger, one column paying for the other, the reduction of one kind of workforce financing the construction of an entirely different one. Google’s design role eliminations are not a cost-cutting exercise that happens to coincide with Gemini investment. They are the mechanism by which Gemini gets funded. The workforce contraction and the technological expansion are not parallel stories running alongside each other. They are the same story, told from two different vantage points, by two different sets of people with very different experiences of the same institutional decision.

This is the paradox of 2026. Innovation is being built on disruption. The future is being funded by the present. And the people writing the cheques for what comes next are, in many cases, writing them with the savings generated by eliminating the jobs of people who had no vote in that transaction.

Understanding this is not the same as accepting it as inevitable or endorsing it as ethical. It is simply the prerequisite for seeing clearly what is actually happening rather than the version of it that is most convenient for any particular audience.

Because here is what the data, the company strategies, and the human stories of 2026 collectively establish beyond serious dispute.

The workforce is not shrinking. It is mutating.

The distinction is not semantic. A shrinking workforce means fewer people employed, less economic activity, a contraction in the total sum of human productive capacity. A mutating workforce means the form of employment is changing, the shape of valuable human capability is shifting, the architecture of what organisations need from people is being redesigned around a new technological reality. The total number of jobs globally is not collapsing. The composition of those jobs is being rebuilt from the inside out, at a pace that the institutions responsible for workforce development have not kept up with and are not currently on track to match.

The companies driving this mutation are not reducing their relationship with human talent. They are redesigning it. Apple’s 20,000 new hires are not a philanthropic gesture toward employment statistics. They are a strategic investment in the specific human capabilities that Apple’s competitive vision requires, capabilities that AI systems do not currently possess and cannot reliably replicate. Nvidia’s expansion into physical AI and robotics is not a hedge against a future where AI takes all the jobs. It is a bet that the most valuable work of the next decade will sit precisely at the intersection of human expertise and machine capability, and that the people who inhabit that intersection will be worth more, not less, than their predecessors. Even Meta, the company whose 2026 restructuring most fits the displacement narrative, is not building a future without humans. It is building a future where the humans it employs are doing categorically different work than the humans it let go.

The redesign is real. The intention behind it, at every major technology company, is not to eliminate the human workforce. It is to eliminate the version of the workforce that predates AI and replace it with one that is built around it.

Which brings us to the real risk of this moment. And it is not the one most people are worried about.

The real risk is not being replaced.

It is being left behind.

Being replaced implies passivity, implies that something is being done to you by a force you have no relationship with and no agency over. Being left behind implies a different dynamic entirely. It implies movement, direction, a world that is going somewhere at a certain speed, and the very real possibility of losing pace with it not because you were pushed out but because the distance between where you are and where things are going became too great to close.

The workers who will struggle most in the AI economy of the next decade are not the ones whose roles were automated away in 2026. They are the ones who interpreted that automation as a temporary disruption, waited for the terrain to stabilise back into familiar shapes, and discovered too late that the terrain had permanently changed while they were waiting. The professionals who will find themselves genuinely, structurally marginalised are not the ones who lacked intelligence or capability or work ethic. They are the ones who treated AI literacy as someone else’s responsibility, as a technical specialism for engineers and researchers rather than the foundational fluency of a new era of work that belongs to everyone operating within it.

The reskilling window is not infinite. It is not closing tomorrow. But it is not standing open indefinitely either, and the people who move through it earliest will find themselves on the other side of a transition with considerably more options than those who move through it last.

So here, finally, is what 2026 is actually telling us about work.

It is not telling us that jobs are ending. The evidence does not support that story. It is not telling us that everything will be fine, that the market will sort itself out, that the transition will be smooth and the new roles will be accessible to everyone displaced by the old ones. The evidence does not support that story either.

What it is telling us is that the era of the predictable career is over.

The professional life that previous generations could map in advance, the clear progression from entry level to seniority within a stable and slowly evolving role definition, the expectation that the skills acquired in one decade would remain the relevant skills of the next, the assumption that institutional loyalty and accumulated expertise would provide a kind of employment security: that model of working life has been structurally undermined by a technological shift that rewards adaptability above all other professional qualities.

The careers that will thrive in the world that 2026 is building are not the ones most carefully planned. They are the ones most continuously updated. They are not the ones most deeply invested in a single expertise, but the ones most fluently able to combine domain depth with AI literacy in configurations that the market has not yet fully priced. They are not the ones most loyal to the version of work they were trained for, but the ones most willing to be retrained for the version of work that is actually arriving.

2026 is not the end of jobs.

It is the end of the assumption that yesterday’s preparation is sufficient for tomorrow’s economy.

The workforce is mutating. The only question that remains is whether you are mutating with it.

Sources & References

The reporting, statistics, and leadership insights referenced throughout this blog are drawn from the following verified sources. Each link corresponds directly to the claims made in the sections above.

Meta Layoffs & AI Investment

Meta Is Cutting 15,000 Jobs While Spending $135 Billion on AI
A detailed breakdown of Meta’s 2026 restructuring, including the scale of workforce reduction, targeted departments, and the full scope of its AI capital expenditure commitment.
https://letsdatascience.com/blog/meta-is-cutting-15-000-jobs-while-spending-135-billion-on-ai

Mark Zuckerberg Says AI Will Reshape Meta in 2026 and Change How Billions Use Its Apps
Zuckerberg’s direct statements on AI-first operations, the strategic vision behind Meta’s restructuring, and the long-term role of AI agents within the company’s infrastructure.
https://www.indiatvnews.com/technology/news/mark-zuckerberg-says-ai-will-reshape-meta-in-2026-and-change-how-billions-use-its-apps

AI Workforce Replacement Statistics

Companies Will Replace Workers With AI by 2026
The primary source for the 37% workforce replacement statistic, including industry-wide data on automation targets, roles most at risk, and projected timelines for AI-driven employment shifts.
https://www.hrdive.com/news/companies-will-replace-workers-with-ai-by-2026/760729/

Nvidia Leadership Insights

Nvidia’s Jensen Huang Dismisses AI Job Loss Fears, Sees New Industries Being Born
Jensen Huang’s full argument against mass AI displacement, his case for physical AI and robotics as a trillion-dollar opportunity, and Nvidia’s broader vision for human-AI collaboration in the workforce.
https://enterpriseai.economictimes.indiatimes.com/news/industry/nvidias-jensen-huang-dismisses-ai-job-loss-fears-sees-new-industries-being-born

Apple Hiring and Investment Plans

Apple’s $500 Billion AI Investment to Create 20,000 Tech Jobs
A comprehensive look at Apple’s domestic hiring surge, the breakdown of roles being created across AI research, machine learning, and silicon engineering, and the strategic context behind the Texas AI server facility.
https://www.informationweek.com/it-leadership/apple-s-500-billion-ai-investment-to-create-20-000-tech-jobs

Google Restructuring Details

Google Layoffs Hit Over 100 Design Roles Amid AI Spending Shift
Reporting on Google’s 2026 workforce restructuring, the specific departments affected, the AI-washing debate, and the company’s reallocation of resources toward Gemini model development and AI infrastructure.
https://www.cloudcomputing-news.net/news/google-layoffs-hit-over-100-design-roles-amid-ai-spending-shift/

Frequently Asked Questions

Q1. Is AI actually replacing jobs in 2026 or is it just a media narrative?

Both the concern and the scepticism have merit, but neither tells the complete story. AI is genuinely replacing specific categories of work, particularly roles built around repetition, routine data processing, and structured task execution. At the same time, it is creating entirely new job categories in AI ethics, machine learning engineering, prompt engineering, and domain-specific AI specialisation. The media tends to amplify whichever half of that story generates more engagement in a given news cycle. The honest answer is that replacement and creation are happening simultaneously, unevenly distributed across different industries, skill sets, and income levels, and the full picture requires holding both realities at once rather than choosing the more comfortable one.

Q2. Which jobs are genuinely at risk and which ones are safe?

Roles most exposed to AI displacement in 2026 are those defined by high volume, low variance, and structured repetition. Data entry, routine quality assurance, tier-one customer support, and middle management coordination functions sit at the highest risk end of that spectrum. Roles carrying the strongest protection are those requiring genuine creative judgment, deep domain expertise, ethical reasoning, or the kind of contextual human intelligence that AI systems cannot replicate reliably. The nuance worth noting is that seniority and salary alone do not provide the protection many professionals assume they do. A well-compensated middle manager whose primary function is information coordination is, in the current environment, considerably more exposed than a junior professional who has invested seriously in AI literacy and technical fluency.

Q3. Why are companies like Meta cutting thousands of jobs while simultaneously investing billions in AI?

Because those two decisions are not in tension with each other. They are the same decision expressed in two directions. The workforce reductions generate the financial headroom that funds the AI investment, and the AI investment is intended to produce capabilities that replace the functions those workforces previously performed. Meta’s $135 billion AI capital expenditure commitment and its 15,800 job cuts are entries in the same strategic ledger, one financing the other. This pattern is visible at Google and other major technology companies in 2026 and represents a deliberate corporate philosophy: reduce human headcount in areas where AI can absorb the function, then redeploy that capital into AI infrastructure that accelerates the transition. It is efficient by the metrics companies are measured on. Its broader social costs are real and are not currently reflected in those metrics.

Q4. Is it too late to reskill for an AI-driven job market?

No. But the window for a comfortable, gradual transition is narrowing, and the people who move through it earliest will find considerably more options on the other side than those who wait for external pressure to force the decision. The most important thing to understand about AI reskilling in 2026 is that it does not require becoming a machine learning researcher or a software engineer. It requires developing genuine working fluency with AI tools in the specific domain you already operate within. A marketer who understands how to direct, evaluate, and refine AI-generated content is not competing with AI. A finance professional who can combine their domain expertise with AI analytical tools is categorically more valuable than one who cannot. The reskilling that matters most is not a career change. It is a capability expansion built on top of the expertise you already have.

Q5. Which of the four companies covered in this blog offers the best model for how organisations should handle the AI transition?

Each of the four approaches reflects a genuine strategic philosophy rather than a right or wrong answer, and the outcomes of those philosophies will take years to fully evaluate. Meta’s aggressive cut-and-invest model produces maximum short-term capital efficiency and maximum short-term human disruption. Nvidia’s expansion thesis positions it as the infrastructure layer everyone else depends on, a uniquely powerful position that is difficult to replicate. Google’s incremental restructuring allows it to manage the transition without a single seismic announcement while still fundamentally reshaping its workforce over time. Apple’s talent-first approach makes the strongest argument for the long-term competitive value of human expertise combined with AI capability. The model that proves most durable will likely be determined not by which company cut most aggressively or invested most boldly in 2026 but by which one built the most effective long-term relationship between human talent and artificial intelligence. That verdict is not yet in.

Disclaimer: This article is for informational purposes only. All statistics and references are sourced from publicly available third-party publications, with full credit belonging to their original authors. The views expressed represent independent editorial opinion and do not constitute professional, legal, or financial advice. This publication respects all individuals, professions, faiths, and communities without bias or prejudice. Information reflects data available at the time of publication and may be subject to change.